Has your agency or in-house team told you that influencer marketing is "top of the funnel" and really can't be expected to drive business results? If so, it's likely that they are using outdated influencer marketing methods that are sorely in need of an update.

Below I've listed three programs that we've worked on that have driven real business results for our clients along with the challenges we had measuring these results. Web attribution is still a very tricky business (look here for recent articles where two of the biggest digital brands are still struggling with it), but that doesn't mean we shouldn't be working to measure what we can.

Influencer Examples Generating Real Business Results

209% More Units Sold At Retail Than Benchmark Event

Everyone likes a good sales case study and this one is a beauty. For a major beauty brand sold at a major drug store chain, we had a great opportunity: promote a product that was about to offer a BOGO (buy one, get one free) promotion of the same value as one they had offered three months prior at the same retailer. We were even driving to sales on the same day of the week as the last time.

When we were done with our program, we had moved over 200% more units than the last time they offered the same incentive.

Key Considerations:

A clean benchmark for the program was the key to measuring the success here. Often at retail there are a number of confounding variables such as seasonality, natural consumer buying cycles, additional levels of promotion such as end caps and circulars and more. If possible, craft an influencer program specifically to take advantage of a clean benchmark.

We also needed access to sales data from the retailer. Not all manufacturers get that data, and without it, it's pretty much impossible to measure any sales lift.
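For readers who want to see the arithmetic behind a "percent more units than benchmark" figure, here is a minimal sketch. The unit counts are invented placeholders, not the client's data, and the function name `percent_lift` is ours for illustration.

```python
def percent_lift(campaign_units: int, benchmark_units: int) -> float:
    """Percent more units sold during the campaign than in the benchmark event."""
    if benchmark_units <= 0:
        raise ValueError("benchmark_units must be positive")
    # Multiply before dividing so whole-number inputs give a clean result.
    return (campaign_units - benchmark_units) * 100 / benchmark_units


# Placeholder numbers only: a benchmark event that moved 1,000 units
# and a campaign-supported event that moved 3,090 units.
lift = percent_lift(campaign_units=3090, benchmark_units=1000)
print(f"{lift:.0f}% more units than benchmark")
```

Note that a lift of just over 200% means the campaign event sold roughly three times the benchmark volume, not twice.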

$100,000+ in Attributable Online Sales

A four-week program driving over six figures' worth of sales is not a bad way to wrap up a campaign that cost quite a bit less than that, but we also learned a tremendous amount along the way.

Using the Facebook pixel and boosting the influencers' content (with daily optimization) we were able to see people who were exposed to the content and then track whether they made a purchase on the brand website regardless of whether they clicked. We tracked a 24-hour window, a 7-day window and a 28-day window for both clickers and exposed non-clickers.

Here's the most fascinating part: 67% of the buyers purchased within 24 hours of exposure to our influencer content but without clicking the link. Their response was nearly immediate, but it took the form of opening a browser window and typing in the brand name or doing a Google search for the brand. Only 3% of buyers bought off a click in the first 24 hours. This shows just how much of a campaign's performance last-touch attribution can miss.

And yes, the brand experienced a sudden increase in organic search and direct traffic to the website with no other obvious explanation. There is no such thing as a neat purchase funnel.

Key Considerations:

While last-touch attribution can under-count performance, the Facebook pixel can significantly over-count it. Facebook by default gives 100% credit to all sales made from exposed audiences within the 28-day window regardless of what other marketing they may have been exposed to.

This is both a natural limit (how can Facebook possibly know if your customers were exposed to Google ads or TV ads or something else along the way?) and an issue Facebook has no incentive to solve. Everyone likes to see big numbers, including Facebook.

What we've done for clients like this is set up a formula before the campaign. For example, we might give 100% sales credit to 24-hour windows, dropping down to maybe 25% credit for 28-day windows. These can also vary based on whether the buyer clicked or was only exposed. Agreeing to this in advance is a great way to set apples-to-apples benchmarks to use on multiple campaigns.
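To make the idea of a pre-agreed formula concrete, here is a minimal sketch of how such credit weights might be applied. Only the 100% (24-hour) and 25% (28-day) figures echo the example above; the 7-day weights, the click versus exposed split, the dollar amounts, and all names in the code are assumptions for illustration. The sketch also assumes each sale is counted once, in the shortest window that contains it.

```python
# Hypothetical credit weights, agreed with the client before the campaign.
# Keys are (interaction, window); values are the share of each sale credited.
CREDIT_WEIGHTS = {
    ("click", "24h"): 1.00,
    ("click", "7d"): 0.75,    # assumed value
    ("click", "28d"): 0.25,
    ("exposed", "24h"): 1.00,
    ("exposed", "7d"): 0.50,  # assumed value
    ("exposed", "28d"): 0.25,
}


def credited_sales(sales_by_bucket, weights=CREDIT_WEIGHTS):
    """Sum sales dollars after discounting each bucket by its agreed weight.

    sales_by_bucket maps (interaction, window) to sales dollars, with each
    sale placed in exactly one bucket.
    """
    return sum(amount * weights[bucket] for bucket, amount in sales_by_bucket.items())


# Made-up pixel totals, not figures from the campaign described here.
reported = {
    ("click", "24h"): 3_000.0,
    ("exposed", "24h"): 60_000.0,
    ("exposed", "7d"): 20_000.0,
    ("exposed", "28d"): 17_000.0,
}

print(f"Reported by pixel:   ${sum(reported.values()):,.0f}")
print(f"Credited to program: ${credited_sales(reported):,.0f}")
```

Keeping the weights in one table makes it easy to reuse the same agreement across campaigns, which is what keeps the resulting benchmarks comparable.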

A Campaign To Remember (Really)

The first two examples show measurable sales lift, which is fantastic. But what about products for which that data isn't available? Take car sales for example. When you have a product that is expensive and purchased only every 5-7 years, expecting to measure sales from a single influencer program is unrealistic.

Is it possible to interpret social media signals as a proxy for sales intent? As it happens, it is. Facebook has a fair amount of research that shows that engagement (likes/comments/shares) does not correlate with increased sales. Nor does the reach of an ad campaign ("We got 1 BILLION impressions!") correlate with increased sales. But what does correlate with sales is if people remember your program.

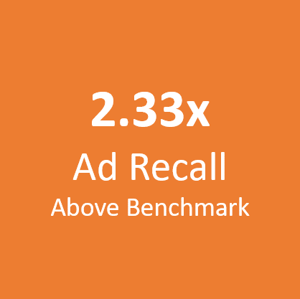

In some cases, therefore, we use Estimated Ad Recall to see how our campaigns are doing relative to the 6% benchmark that we use. For one brand that sells expensive products, we were able to see that our campaign shattered benchmarks, coming in more than 2x higher than usual. This meant that our campaign was standing out much better than similar investments on the platform.

Key Considerations:

In order to use this measurement, you must boost high-performing influencer content, as we do for every campaign. The organic reach can't be measured, but since we only boost the highest-performing content anyway, we're getting our best influencer content in front of the best audiences. As a Facebook metric, this only works on Facebook and Instagram, but it's an efficient, empirical measure that doesn't take a massive spend to determine.
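As a rough sketch of the comparison described above, the snippet below turns an estimated ad recall lift (people) and a reach figure into a recall rate and compares it with the 6% benchmark. It assumes the rate is estimated recall lift divided by people reached; the reach and recall numbers are invented placeholders, and the function names are ours.

```python
BENCHMARK_RECALL_RATE = 0.06  # the 6% benchmark mentioned above


def recall_rate(estimated_recall_people: int, reach: int) -> float:
    """Estimated ad recall lift (people) as a share of people reached."""
    if reach <= 0:
        raise ValueError("reach must be positive")
    return estimated_recall_people / reach


def times_benchmark(rate: float, benchmark: float = BENCHMARK_RECALL_RATE) -> float:
    """How many times higher (or lower) the campaign rate is than the benchmark."""
    return rate / benchmark


# Placeholder inputs only, not results from the campaign described here.
rate = recall_rate(estimated_recall_people=65_000, reach=500_000)
print(f"Recall rate: {rate:.1%}, {times_benchmark(rate):.1f}x the benchmark")
```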

More Ways to Measure Influencer

If you'd like to know more ways to measure influencer marketing, we've got both this white paper (25 Ways to Measure) and this webinar (9 Influencer Marketing Metrics for Your C-Suite) that should provide some value.

We'll be back with more examples in the coming weeks, but feel free to contact us below if you're looking for some specific answers.
